Sigmoid loss function

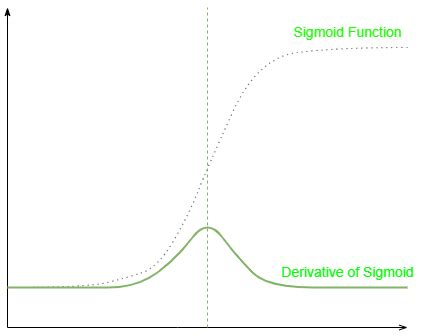

A sigmoid function is a mathematical function having a characteristic "S"-shaped curve or sigmoid curve. A common example of a sigmoid function is the logistic function shown in the first figure and defined by the formula:

$$S(x)=\frac{1}{1+e^{-x}}=\frac{e^{x}}{e^{x}+1}=1-S(-x).$$

Other …

A sigmoid function is a bounded, differentiable, real function that is defined for all real input values and has a non-negative derivative at each point and exactly one inflection point. A sigmoid "function" and a …

Examples include:

• Logistic function $f(x)=\frac{1}{1+e^{-x}}$
• Hyperbolic tangent (shifted and scaled version of the …

Related functions:

• Step function
• Sign function
• Heaviside step function

In general, a sigmoid function is monotonic, and has a first derivative which is bell shaped. Conversely, the integral of any continuous, non-negative, bell-shaped function (with one …

Many natural processes, such as those of complex system learning curves, exhibit a progression from small beginnings that accelerates and approaches a climax over time. When a …

Sigmoid activation function. Used in: the output layer of classification problems. The sigmoid function maps any real number into the interval (0, 1) and is commonly used in the output layer for binary classification. Its drawback is that for inputs greater than about 2 or less than about -2 the gradient shrinks toward 0, which leads to the vanishing gradient problem. The formula is: …
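The logistic formula and the saturation behavior described above can be checked numerically. The following is a minimal sketch using only the Python standard library; the function names `sigmoid` and `sigmoid_grad` are illustrative choices, not taken from any of the quoted sources.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function S(x) = 1 / (1 + e^{-x}).

    The two branches are algebraically identical (1/(1+e^{-x}) = e^{x}/(e^{x}+1));
    picking the branch by sign keeps math.exp from overflowing for large |x|.
    """
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def sigmoid_grad(x: float) -> float:
    """Derivative S'(x) = S(x) * (1 - S(x)); bell shaped with its peak at x = 0."""
    s = sigmoid(x)
    return s * (1.0 - s)


if __name__ == "__main__":
    for x in (-6.0, -2.0, 0.0, 2.0, 6.0):
        print(f"x={x:+.1f}  S(x)={sigmoid(x):.4f}  S'(x)={sigmoid_grad(x):.4f}")
```

The gradient peaks at 0.25 when x = 0 and decays quickly on both sides, which is the saturation that the vanishing gradient remark refers to.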

Really cross, and full of entropy… In neural networks tasked with binary classification, sigmoid activation in the last (output) layer and binary cross-entropy (BCE) as the loss function are standard fare. Yet, occasionally one stumbles across statements that this specific combination of last-layer activation and loss may result in numerical …

When I work on deep learning classification problems using PyTorch, I know that I need to add a sigmoid activation function at the output layer with Binary Cross-Entropy Loss for binary classification, or add a log-softmax function with Negative Log-Likelihood Loss (or just use Cross-Entropy Loss on the raw outputs instead) for multiclass classification problems.
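The numerical concern raised in the first snippet can be illustrated with a small standard-library sketch. It compares a naive "sigmoid, then BCE" computation with the algebraically equivalent form written directly in terms of the raw output (logit) z, namely max(z, 0) - z*y + log(1 + e^{-|z|}). The function names are hypothetical, and this is not the code of any particular framework.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic sigmoid, branch chosen by sign to avoid overflow in math.exp."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def bce_from_probability(p: float, y: float) -> float:
    """Naive BCE on a probability; fails when p has rounded to exactly 0 or 1."""
    return -(y * math.log(p) + (1.0 - y) * math.log(1.0 - p))


def bce_from_logit(z: float, y: float) -> float:
    """Same loss computed from the logit z without ever forming log(0)."""
    return max(z, 0.0) - z * y + math.log1p(math.exp(-abs(z)))


if __name__ == "__main__":
    z, y = 40.0, 0.0            # confident prediction of class 1, true label 0
    p = sigmoid(z)              # rounds to exactly 1.0 in double precision
    try:
        naive = bce_from_probability(p, y)
    except ValueError:          # math.log(0.0) raises a domain error
        naive = float("inf")
    print("naive :", naive)
    print("stable:", bce_from_logit(z, y))
```

For moderate logits the two functions agree to rounding error; they differ only once the sigmoid output saturates.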

Experiments have been carried out to predict the future new infection cases in Italy for a period of 5 days and 10 days and in USA for a period of 5 days and 8 days. Data has been collected from Harvard dataverse [15, 16] and [19]. For USA the data collection period is '2024-03-09' to '2024-04-08' and for Italy it is '2024-02-05' to '2024-04-10'.

For my multi-label problem it wouldn't make sense to use softmax, of course, as each class probability should be independent of the others. So my final layer is just sigmoid units that squash their inputs into a probability range 0..1 for every class. Now I'm not sure what loss function I should use for this.
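One common choice for the multi-label setup in the question is to apply binary cross-entropy to each sigmoid output independently and average over the classes. The sketch below is offered under that assumption, not as the answer given in the original thread; `multilabel_bce`, the clipping constant `EPS`, and the example logits are all hypothetical. It also shows why softmax does not fit: softmax probabilities are forced to sum to 1, whereas the sigmoid outputs are not.

```python
import math

EPS = 1e-12  # assumed clipping constant so that log() never receives 0


def sigmoid(z: float) -> float:
    """Logistic sigmoid, branch chosen by sign to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def softmax(logits: list[float]) -> list[float]:
    """Softmax with the usual max-subtraction for stability."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def multilabel_bce(logits: list[float], targets: list[float]) -> float:
    """Mean of per-class binary cross-entropies on independent sigmoid outputs."""
    total = 0.0
    for z, y in zip(logits, targets):
        p = min(max(sigmoid(z), EPS), 1.0 - EPS)
        total += -(y * math.log(p) + (1.0 - y) * math.log(1.0 - p))
    return total / len(logits)


if __name__ == "__main__":
    logits = [3.0, 3.0, -3.0]    # two labels present, one absent
    targets = [1.0, 1.0, 0.0]
    print("sigmoid sum:", sum(sigmoid(z) for z in logits))  # may exceed 1
    print("softmax sum:", sum(softmax(logits)))             # always about 1
    print("loss       :", multilabel_bce(logits, targets))
```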

The binary entropy function is defined as $L(p) = -p\ln(p) - (1-p)\ln(1-p)$, and by continuity we define $p\ln(p) = 0$ at $p = 0$. A closely related formula, the binary cross-entropy, is often used as a loss function in statistics. Say we have a function $h(x_i) \in [0, 1]$ which makes a prediction about the label $y_i$ of the input $x_i$.

The sigmoid function produces results similar to the step function in that the output is between 0 and 1. The curve crosses 0.5 at z = 0, which lets us set up a rule for the activation function, such as: if the sigmoid neuron's output is larger than or equal to 0.5, it outputs 1; if the output is smaller than 0.5, it outputs 0.
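As a hedged illustration of the two snippets above, the sketch below implements the binary entropy with the 0·ln(0) = 0 convention, a per-example binary cross-entropy between a label y and a prediction h, and the 0.5 threshold rule. The helper names (`xlog`, `binary_entropy`, `binary_cross_entropy`, `predict_label`) are made up for this example. It also shows one sense in which the two formulas are closely related: the cross-entropy of p with itself equals the entropy L(p).

```python
import math


def xlog(a: float, b: float) -> float:
    """Return a * ln(b), using the continuity convention 0 * ln(0) = 0."""
    return 0.0 if a == 0.0 else a * math.log(b)


def binary_entropy(p: float) -> float:
    """L(p) = -p ln(p) - (1 - p) ln(1 - p) for p in [0, 1]."""
    return -xlog(p, p) - xlog(1.0 - p, 1.0 - p)


def binary_cross_entropy(y: float, h: float) -> float:
    """-y ln(h) - (1 - y) ln(1 - h).

    Assumes h is strictly inside (0, 1) wherever the label puts weight on it.
    """
    return -xlog(y, h) - xlog(1.0 - y, 1.0 - h)


def predict_label(p: float) -> int:
    """Threshold rule from the text: output 1 if p >= 0.5, otherwise 0."""
    return 1 if p >= 0.5 else 0


if __name__ == "__main__":
    print(binary_entropy(0.5))              # maximum of L, equal to ln(2)
    print(binary_cross_entropy(1.0, 0.9))   # small loss for a good prediction
    print(predict_label(0.73), predict_label(0.2))
```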

Sigmoid activation is a common first step in deep learning. It doesn't take much work to derive the derivative of this smooth function either. Sigmoidal curves are "S"-shaped. The term also covers other "S"-shaped functions such as tanh(x). The fundamental distinction is that tanh(x) does not lie in the interval [0, 1]. Sigmoid function …
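The relationship between the logistic sigmoid and tanh can be made concrete with the standard identity tanh(x) = 2·S(2x) − 1, which makes tanh a shifted and scaled logistic function whose range is (−1, 1) rather than (0, 1). A minimal check, with an illustrative helper named `tanh_via_sigmoid`:

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, branch chosen by sign to avoid overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def tanh_via_sigmoid(x: float) -> float:
    """tanh expressed through the logistic function: 2 * S(2x) - 1."""
    return 2.0 * sigmoid(2.0 * x) - 1.0


if __name__ == "__main__":
    for x in (-3.0, -0.5, 0.0, 0.5, 3.0):
        print(f"x={x:+.1f}  tanh={math.tanh(x):+.6f}  2*S(2x)-1={tanh_via_sigmoid(x):+.6f}")
```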

Figure 5.1 The sigmoid function $\sigma(z) = \frac{1}{1+e^{-z}}$ takes a real value and maps it to the range (0, 1). It is nearly linear around 0 but outlier values get squashed toward 0 or 1.

To create a probability, we'll pass z through the sigmoid function, $\sigma(z)$. The sigmoid function (named because it looks like an s) is also called the logistic function …

When you use `sigmoid_cross_entropy_with_logits` for a segmentation task you should do something like this: `loss = tf.nn.sigmoid_cross_entropy_with_logits(labels=labels, logits=predictions)`, where `labels` is a flattened Tensor of the labels for each pixel, and `logits` is the flattened Tensor of predictions for each pixel.

The sigmoid function is used because it maps the interval $(-\infty, \infty)$ monotonically onto $(0, 1)$, and additionally has some nice mathematical properties that are useful for fitting and interpreting models. It is important that the image is $(0, 1)$, because most classification models work by estimating probabilities.

What is the Sigmoid Function? A sigmoid function is a mathematical function which has a characteristic S-shaped curve. There are a number of common sigmoid functions, such as the logistic function, the hyperbolic …

`nn.BCEWithLogitsLoss` is binary cross-entropy loss with a sigmoid built in. It may be used when your model's output layer is not wrapped with a sigmoid. It is typically used with the raw output of a single output-layer neuron. Simply put, your model's output, say `pred`, will be a raw value.

This series aims to explain loss functions of a few widely-used supervised learning models, ... we want to constrain predictions to some values between 0 and 1. That's why the sigmoid function is applied to the raw model output, which provides the ability to predict with probability. What the hypothesis function returns is the probability ...
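To make the idea of "applying the sigmoid to the raw model output" concrete, here is a minimal sketch of a logistic-regression style hypothesis function. The names `hypothesis`, `weights`, `bias`, and `features`, as well as the example values, are assumptions made for illustration and do not come from the quoted series.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic sigmoid, branch chosen by sign to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def hypothesis(weights: list[float], bias: float, features: list[float]) -> float:
    """Squash the raw linear score z = w . x + b into a value in (0, 1)."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


if __name__ == "__main__":
    # Hypothetical parameters and input, chosen only for demonstration.
    p = hypothesis([0.8, -0.4], 0.1, [1.5, 2.0])
    print(f"estimated probability of the positive class: {p:.4f}")
```

The returned value can then be fed to a binary cross-entropy loss during training or compared against a threshold such as 0.5 at prediction time.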